OCI: Logging Object Events with the Streaming Service

Two OCI services can be combined to support logging of object events: Streaming and Events.

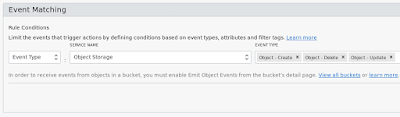

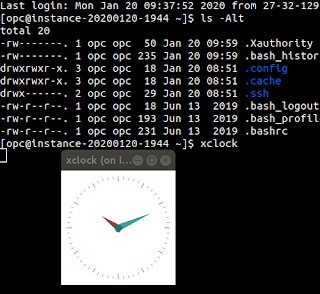

With the Events service, we define which events to match by specifying a service name and the corresponding event types. For Object Storage, we log events based on object create, update and delete.

The next part is that we can define the action type, with three possible options:

- Streaming

- Notifications

- Functions

Next, we need to define an IAM policy so that cloud events can use the streaming functionality. Head over to IAM and create a new policy with the statement:

    allow service cloudEvents to use streams in tenancy

You will want this policy in your root compartment.

Now, we can go ahead and create our event logging. Back over at Events Services (Application Integration -> Event Service), create a new rule. I called mine "StreamObjectEvents".

In the action, set the action type to Streaming and select the specific stream that events should go into.

With all that set up, go ahead and perform some operations on your bucket. Once done, head back over to your stream, and refresh the events, and you should see new rows in there.

Now that all the pieces are in place, it's time to figure out how we'll consume this data. In this example I'll be creating a bash script. It's a simple three-part process:

Step 1 - We need to determine our stream OCID.

    oci streaming admin stream list \
        --compartment-id "$TS_COMPART_ID" \
        --name ObjLog \
        | jq -r '.data[].id'

Here, my compartment ID is set in an environment variable named "TS_COMPART_ID", and I look up the stream named ObjLog. The later steps refer to the resulting OCID as `$objLogStreamId`.

Step 2 - Create a cursor

Streams have a concept of cursors. A cursor tells OCI where in the stream to start reading, and it expires after 5 minutes. There are different kinds of cursors, and the documentation kindly lists five types for us:

- AFTER_OFFSET

- AT_OFFSET

- AT_TIME

- LATEST

- TRIM_HORIZON

My code looks like this:

    oci streaming stream cursor create-cursor \
        --stream-id "$objLogStreamId" \
        --type AT_TIME \
        --partition 0 \
        --time "$(date --date='-1 hour' --rfc-3339=seconds)" \
        | jq -r '.data.value'

Basically, I'm asking for any events on partition 0 that occurred within the last hour. The next step refers to the returned cursor value as `$cursorId`.
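The 5-minute cursor lifetime matters if a script pauses between creating the cursor and reading messages. The following is a minimal sketch, not part of the original script, of one way to keep track of a cursor's age. The names `cursor_expired`, `CURSOR_TTL_SECONDS` and `cursorCreatedAt` are hypothetical, and the 300-second value is simply the 5 minutes mentioned above.

```shell
#!/usr/bin/env bash
# Hypothetical helper for illustration; not part of the OCI CLI.
CURSOR_TTL_SECONDS=300   # assumed lifetime: the 5 minutes described above

# cursor_expired CREATED_AT NOW
# Both arguments are Unix timestamps in seconds (for example from `date +%s`).
# Returns 0 (true) once the cursor is at least CURSOR_TTL_SECONDS old.
cursor_expired() {
    local created_at=$1 now=$2
    (( now - created_at >= CURSOR_TTL_SECONDS ))
}

# Record the time right after the cursor has been created.
cursorCreatedAt=$(date +%s)

if cursor_expired "$cursorCreatedAt" "$(date +%s)"; then
    echo "cursor expired, create a new one"
else
    echo "cursor still usable"
fi
```

A script could run this check before each read and create a fresh cursor when it reports expiry.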

Step 3 - Reading and reporting the data

Now that we have all the pieces, we can consume the data in our log. One note: from an auditing point of view, I think it would be better if the event data also included the user performing the action. Maybe that will be added in the future.

Also note that the data is encoded in base64, so we first need to decode it. Decoding returns JSON in a structure that resembles the following:

    {
      "eventType": "com.oraclecloud.objectstorage.updateobject",
      "cloudEventsVersion": "0.1",
      "eventTypeVersion": "2.0",
      "source": "ObjectStorage",
      "eventTime": "2019-10-02T01:35:32.985Z",
      "contentType": "application/json",
      "data": {
        "compartmentId": "ocid1.compartment.oc1..xxx",
        "compartmentName": "education",
        "resourceName": "README.md",
        "resourceId": "/n/xxx/b/bucket-20191002-1028/o/README.md",
        "availabilityDomain": "SYD-AD-1",
        "additionalDetails": {
          "bucketName": "bucket-20191002-1028",
          "archivalState": "Available",
          "namespace": "xxx",
          "bucketId": "ocid1.bucket.oc1.ap-sydney-1.xxx",
          "eTag": "bdef8e2e-fa20-4889-8cdc-fc1cb7ee5e3b"
        }
      },
      "eventID": "e8e5ef3b-1a98-4bf7-4e47-2827f517feae",
      "extensions": {
        "compartmentId": "ocid1.compartment.oc1..xxx"
      }
    }
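To see the base64 step in isolation, here is a small sketch that needs no OCI access. It encodes a sample payload the way a message value arrives and then decodes it again. The `sampleEvent` string is a made-up, trimmed-down stand-in for a real event, and the variable names are my own for illustration.

```shell
#!/usr/bin/env bash
# Made-up sample payload: a shortened stand-in for a real event.
sampleEvent='{"eventType":"com.oraclecloud.objectstorage.updateobject","data":{"resourceName":"README.md"}}'

# Encode it as base64 on a single line (tr strips any line wrapping).
encoded=$(printf '%s' "$sampleEvent" | base64 | tr -d '\n')

# Decode it back to the original JSON text, as the script does per message.
decoded=$(printf '%s' "$encoded" | base64 -d)

echo "$decoded"
```

The decoded text is what then gets passed to `jq` to pick out individual fields.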

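So, I iterate and output the data like so:

    # Header row; columns are tab-separated and each row ends with a newline.
    tabData="eventType\teventTime\tresourceName\n"

    # Each message value is base64 text without whitespace, so a for loop
    # over the command output yields one value per iteration.
    for evtVal in $(oci streaming stream message get \
            --stream-id "$objLogStreamId" \
            --cursor "$cursorId" \
            | jq -r 'select(.data != null) | .data[].value'
        )
    do
        # Decode the event, then pull out the fields to report.
        evtJson=$(echo "$evtVal" | base64 -d)
        evtType=$(echo "$evtJson" | jq -r '.eventType')
        evtTime=$(echo "$evtJson" | jq -r '.eventTime')
        resourceName=$(echo "$evtJson" | jq -r '.data.resourceName')

        line=$(printf "%s\t%s\t%s" "$evtType" "$evtTime" "$resourceName")
        tabData+="$line\n"
    done

    # %b expands the \t and \n escapes without using the data as a format string.
    printf '%b' "$tabData" | column -t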
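One caveat with accumulating rows in a string that printf later interprets: special characters in an object name (backslash sequences, and `%` if the string is used as the format) can be misread. As a hedged alternative, not what the original script does, the rows can be kept in a bash array and printed with a fixed format. The `rows` array, the `add_row` function and the sample values below are hypothetical.

```shell
#!/usr/bin/env bash
# Rows are collected as array elements; the values below are made-up samples.
rows=()

# add_row FIELD1 FIELD2 FIELD3
# Joins the three fields with real tab characters and stores the row.
add_row() {
    rows+=("$(printf '%s\t%s\t%s' "$1" "$2" "$3")")
}

add_row "eventType" "eventTime" "resourceName"
add_row "com.oraclecloud.objectstorage.updateobject" "2019-10-02T01:35:32.985Z" "100%_done.md"

# '%s\n' is the only format string; every row is passed as plain data,
# so a literal % or backslash in a name is printed unchanged.
table=$(printf '%s\n' "${rows[@]}")
printf '%s\n' "$table"
```

The result can be piped through `column -t` in the same way as before.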

I've placed the complete script on GitHub:

https://github.com/tschf/oci-scripts/blob/master/objlog.sh